- Products

- RouteEye – Real-time Vehicle Tracking System

- KalravAI – A Platform to Create Customizable AI Agents

- LeafAid AI – Your Smart Crop & Plant Health Companion

- StaffIRIS – GPS-based Real-time Monitoring of Field Staffs

- Koolanch – Self-service Data Integration Platform

- SkillAnything – eLearning Platform for Organizations

- KafkaView – Real-time Kafka Cluster Monitoring

- Solutions

- AI & ML

- IoT

- Security

- Data Engineering

- Company

+91 (988) 002 7443 | [email protected]

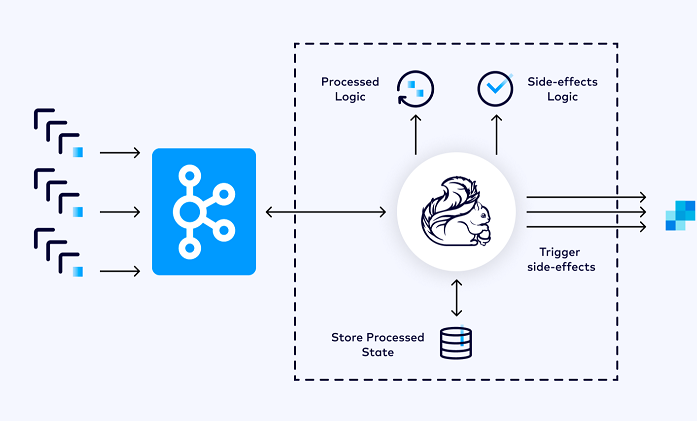

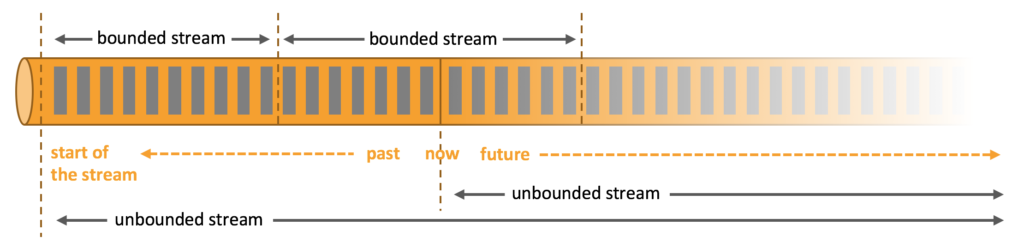

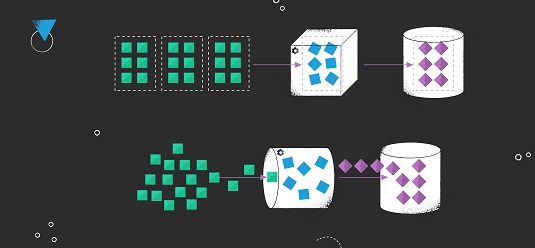

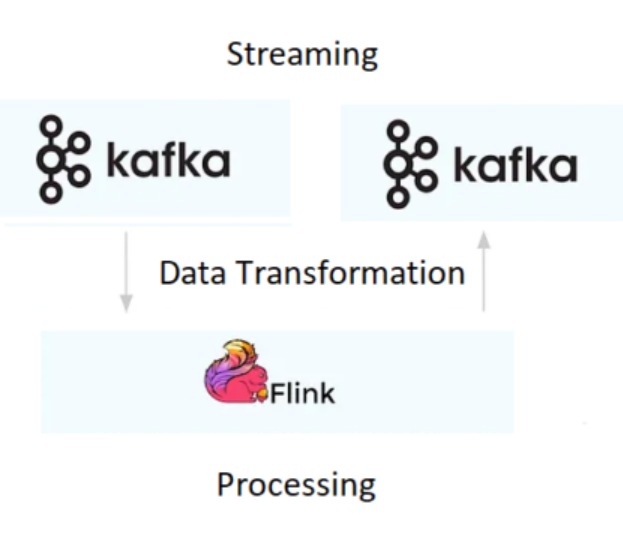

Process Streaming Data with Apache Flink

© Copyright 2026. All Rights Reserved.